Introduction

Features are the key vehicle for value flow in SAFe, yet they are also the source of much confusion amongst those implementing it. The framework specifies that “Each feature includes a Benefit Hypothesis and acceptance criteria, and is sized or split as necessary to be delivered by a single Agile Release Train (ART) in a Program Increment (PI)”. In practice, it quickly becomes obvious that you need a little more information about each feature, and on what feels like countless occasions I have facilitated the definition of a Feature Template with a Product Management group. People in classes ask me for a sample, and of course the only samples I have belong to my clients and aren’t mine to share.

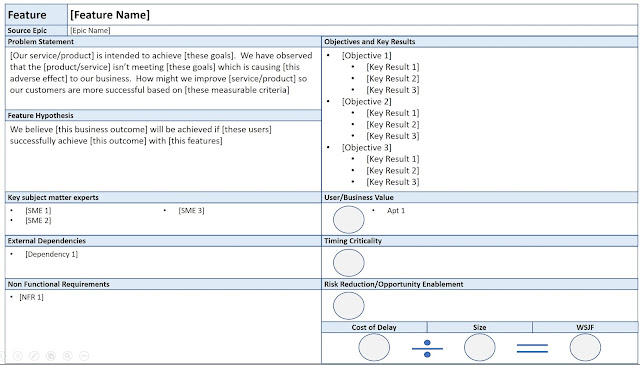

The new emphasis on Lean UX in SAFe 4.5 finally inspired me to put some time into crafting a Feature Template of my own that I could share. The result is a synthesis of recurring patterns I have observed in my coaching. It focuses on the “essential components”, with guidance on additional information that might be required.

How much detail is needed, and by when?

I divide the refinement lifecycle of the Feature into two phases.

- Prior to WSJF assessment

- Prior to PI Planning

A feature needs enough detail to enable Cost of Delay and sizing assessment, but no more. Information is inventory, and we want a lightweight holding pattern until the Feature’s priority indicates that it needs to be prepared for PI Planning.

To this end, my template takes a canvas approach to supporting WSJF assessment, and I provide some guidance on the additional detail likely to be needed in readiness for PI Planning.

Feature Canvas

Problem Statement

Leveraging the work of Jeff Gothelf in Lean UX, we base the feature on a definition of the problem it is designed to address. Gothelf provides two excellent template statements here:

New Product:

“The current state of the [domain] has focussed primarily on [customer segments, pain points, etc].

What existing products/services fail to address is [this gap]

Our product/service will address this gap by [vision/strategy]

Our initial focus will be [this segment]”

Existing Product:

“Our [service/product] is intended to achieve [these goals].

We have observed that the [product/service] isn’t meeting [these goals] which is causing [this adverse effect] to our business.

How might we improve [service/product] so that our customers are more successful based on [these measurable criteria]?”

Feature Hypothesis

Again, leveraging Gothelf’s work we form a hypothesis as to the impact our Feature might achieve. The template takes the form:

“We believe this [business outcome] will be achieved if [these users] successfully achieve [this user outcome] with [this feature]”.

Objectives and Key Results (OKRs)

Features are intended to provide verifiable impact, and defining that impact is critical both to effective Cost of Delay estimation and to post-deployment verification. We want to ensure that quantifiable movement in identified Leading Indicators supports the ongoing evolution of our Product Management strategy.

As I have documented in a previous post, I have become quite a fan of the OKR model initiated at Intel and popularized by Google, and I find it a useful discipline for defining feature impacts.

If OKRs seem a little daunting, you could instead list Leading Indicators and their expected movements in this section.
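To make “quantifiable movement” concrete, one option is to record each key result or leading indicator as a baseline and a target, then score a measured value as the fraction of the intended movement achieved. This linear 0.0 to 1.0 scoring is an illustrative assumption (it loosely follows the grading scale popularized by Google), not part of the canvas, and the indicator names and numbers below are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class KeyResult:
    """A measurable leading indicator with an expected movement (hypothetical)."""
    description: str
    baseline: float
    target: float

    def progress(self, measured: float) -> float:
        """Fraction of the intended movement achieved, clamped to 0.0-1.0."""
        span = self.target - self.baseline
        if span == 0:
            raise ValueError("target must differ from baseline")
        # Works for indicators that should rise or fall, because the sign
        # of `span` matches the direction of the intended movement.
        fraction = (measured - self.baseline) / span
        return max(0.0, min(1.0, fraction))


if __name__ == "__main__":
    # Hypothetical indicators for a restaurant reservations feature.
    krs = [
        KeyResult("Online bookings per week", baseline=120, target=300),
        KeyResult("No-show rate (%)", baseline=15, target=8),
    ]
    measured = [210, 11]
    for kr, value in zip(krs, measured):
        print(f"{kr.description}: {kr.progress(value):.2f}")
```

Because the score is relative to the stated baseline, the same structure serves both the Cost of Delay discussion (what movement do we expect?) and post-deployment verification (what movement did we get?).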

Cost of Delay Components

The effectiveness and objectivity of Cost of Delay estimation workshops are heavily driven by the data on the table. The three sections for User/Business Value, Time Criticality and Risk Reduction/Opportunity Enablement provide an opportunity to highlight supporting data for the assessment of each of the three Cost of Delay components.
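For reference, SAFe sums the three components to give Cost of Delay, and WSJF divides Cost of Delay by Job Size. The minimal sketch below shows that arithmetic and the resulting ranking; the feature names and relative scores are hypothetical, assuming the relative (modified Fibonacci) scoring typically used in these workshops.

```python
from dataclasses import dataclass


@dataclass
class FeatureScore:
    """Relative workshop scores for one feature (hypothetical values)."""
    name: str
    user_business_value: int
    time_criticality: int
    risk_reduction_opportunity_enablement: int
    job_size: int

    @property
    def cost_of_delay(self) -> int:
        # Cost of Delay is the sum of the three components.
        return (self.user_business_value
                + self.time_criticality
                + self.risk_reduction_opportunity_enablement)

    @property
    def wsjf(self) -> float:
        # Weighted Shortest Job First: Cost of Delay divided by Job Size.
        if self.job_size <= 0:
            raise ValueError("job_size must be positive")
        return self.cost_of_delay / self.job_size


def rank_by_wsjf(features: list[FeatureScore]) -> list[FeatureScore]:
    # Highest WSJF first: the strongest candidates for the next PI.
    return sorted(features, key=lambda f: f.wsjf, reverse=True)


if __name__ == "__main__":
    backlog = [
        FeatureScore("Online table reservations", 13, 8, 5, 8),
        FeatureScore("Loyalty points", 8, 3, 2, 5),
        FeatureScore("Waitlist SMS alerts", 5, 8, 3, 3),
    ]
    for f in rank_by_wsjf(backlog):
        print(f"{f.name}: CoD={f.cost_of_delay}, WSJF={f.wsjf:.2f}")
```

The data captured in the canvas is what justifies each of these numbers; the arithmetic itself is trivial, which is exactly why the quality of the inputs matters so much.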

Key Subject Matter Experts

I rarely, if ever, work with an ART where Product Management is self-sufficient in domain expertise. Deliberately identifying and engaging subject matter experts early in the lifecycle of a feature is critical.

External Dependencies

Nothing makes a feature come unstuck faster than unidentified external dependencies. These should be flushed out early, and should inform prioritization and roadmapping discussions.

Non-Functional Requirements

We know that an ART will have a standing set of non-functional requirements that apply to all features, but occasionally a feature comes with its own specific NFRs.

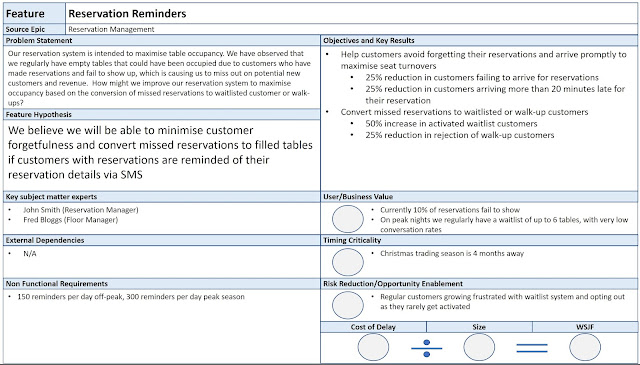

Sample Completed Canvas

It was incredibly difficult to invent a sample feature because my mind kept drifting to real features, but eventually I settled on a fictitious feature for restaurant reservations.

A glimpse at how you might visualise your next WSJF estimation workshop

Detail beyond the Canvas

When a feature is selected as a candidate for an upcoming PI, it triggers the collection of additional framing detail. How much detail is appropriate varies from ART to ART, and at different stages in each ART’s evolution.

At a minimum, it will require acceptance criteria. Other things to consider include:

- User Journeys: Some framing UX exploration is often very useful in preparing a Feature, and is a great support for teams during PI Planning.

- Architectural Impact Assessment: Some form of deliberate consideration of the feature’s potential architectural impact is critical in most complex environments. It should rarely be more than a page; a common approach I find is one to two paragraphs of text accompanied by a high-level sequence diagram identifying the expected interactions between architectural layers.

- Change Management Impacts: How do we get from deployed software to realised value? Who will need training? Are Work Instructions required?
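The two refinement phases (prior to WSJF assessment and prior to PI Planning) can be expressed as a simple readiness check. This is a minimal sketch, not part of SAFe or of the template itself: the `FeatureCanvas` class, its field names, and the choice of which fields are mandatory at each phase are illustrative assumptions based on the canvas sections, with acceptance criteria as the one addition required before PI Planning.

```python
from dataclasses import dataclass, field


@dataclass
class FeatureCanvas:
    # Hypothetical field names mirroring the canvas sections.
    problem_statement: str = ""
    hypothesis: str = ""
    okrs: list[str] = field(default_factory=list)
    user_business_value: str = ""
    time_criticality: str = ""
    risk_reduction_opportunity_enablement: str = ""
    subject_matter_experts: list[str] = field(default_factory=list)
    external_dependencies: list[str] = field(default_factory=list)
    specific_nfrs: list[str] = field(default_factory=list)
    # Collected once the feature becomes a PI candidate.
    acceptance_criteria: list[str] = field(default_factory=list)

    def missing_for_wsjf(self) -> list[str]:
        """Fields assumed mandatory before WSJF assessment."""
        required = {
            "problem_statement": self.problem_statement,
            "hypothesis": self.hypothesis,
            "okrs": self.okrs,
            "user_business_value": self.user_business_value,
            "time_criticality": self.time_criticality,
            "risk_reduction_opportunity_enablement":
                self.risk_reduction_opportunity_enablement,
        }
        # Empty strings and empty lists are falsy, so both count as missing.
        return [name for name, value in required.items() if not value]

    def missing_for_pi_planning(self) -> list[str]:
        """Everything needed for WSJF, plus at least one acceptance criterion."""
        missing = self.missing_for_wsjf()
        if not self.acceptance_criteria:
            missing.append("acceptance_criteria")
        return missing


if __name__ == "__main__":
    # Hypothetical, partially completed canvas.
    canvas = FeatureCanvas(
        problem_statement="Diners cannot book a table outside opening hours.",
        hypothesis="We believe weekend covers will grow if diners "
                   "successfully book a table with online reservations.",
    )
    print(canvas.missing_for_wsjf())
```

Subject matter experts, external dependencies and specific NFRs are left optional in this sketch because an empty list can legitimately mean “none identified”; an ART tuning its own template might well decide otherwise.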

Tuning your Template

Virtually every ART I have worked with has oscillated between “too much up-front information” and “not enough up-front information”. You want to know enough to enable teams and product owners to effectively plan and execute iteratively, yet not so much that you constrain the opportunity for teams and product owners to innovate and take ownership of their interpretation.

Every PI is a learning opportunity. Take stock a week after PI planning and look at both the information you wished you’d had, and your observations as to the value proposition of the information you had provided.

Then, take stock again late in the PI. Look at how the features played out over the PI, and the moments you wish you could have avoided 😊

Who completes the Canvas/Template?

The Product Manager is the content authority at the Program Backlog level, and hence the ultimate owner. However, one of the nice influences Lean UX has brought to SAFe 4.5 is a real emphasis on “collaborative design”. To avoid the waste of knowledge handoffs, the best people to work through the majority of the detail (including preparing the canvas itself) are the product owners and teams likely to implement the feature.

Awesome work Mark! We have created some for clients too that we can't share. :-(

Thanks for sharing Mark - these are really useful.

I really like the hypothesis statements for features and think that this is a major enhancement in SAFe 4.5. I wrote a blog post about it here: http://runningmann.co.za/2017/09/25/the-power-of-feature-hypotheses/ that you might be interested in.

These are awesome Mark. Thanks for sharing

Thanks for sharing your experience on this area with the community Mark. Feature Templates are a very common requirement for Agile practitioners, maybe you can persuade the SAFe community to include an artefact like this in the framework.

This is great! Do you have the template format available so we don't have to replicate?

great stuff, how would you differentiate this from SAFe Epics

Nice blog Mark

How can I get a downloadable version of this Canvas?
